Load Balancer vs. Rate Limiting: A Comparison to Clear Up the Concepts

Introduction

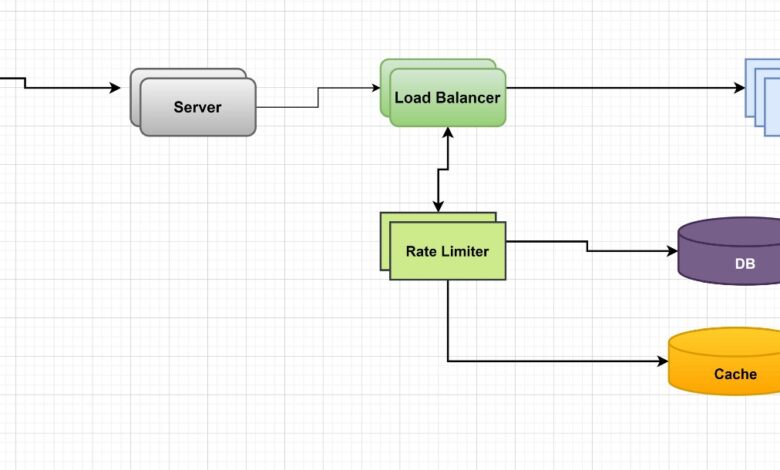

Before comparing load balancing and rate limiting, it helps to define what a load balancer is. A load balancer is a device or service that sits between the servers in your infrastructure and the users who access them. It distributes the workload across multiple servers so that no server sits underutilized while another is overworked. Acting as a reverse proxy and gatekeeper, it receives HTTP requests from client browsers, routes each request to a backend server according to the rules you configure (for example by source IP address or URL pattern), and passes the backend’s response back to the client.

Let’s look at load balancing and rate limiting in more detail.

Load Balancing is a way to distribute traffic across multiple servers.

Load balancing is a way to distribute traffic across multiple servers. It is used to increase capacity beyond the capacity of any one server, and in some cases it can even improve speed and reliability through redundancy.

Load-balanced deployments are usually divided into two main categories: active/passive and active/active.

Active/active means that multiple instances of the same service run on different nodes in a datacenter and all of them serve traffic at the same time. The load balancer spreads connections across the instances, so each node handles only its share of the load with its own network and compute resources. This reduces congestion, because no single node has to carry all the traffic.

In an active/passive system, by contrast, only one instance serves traffic at a time, while one or more standby instances wait to take over if it fails. The main difference is therefore how the hardware is used: active/active puts every node to work on live traffic and needs enough nodes to absorb the load when one fails, whereas active/passive keeps the standby capacity idle until a failover happens.
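To make the two modes concrete, here is a minimal sketch of how a balancer might choose a node in each case. The `Node` class and both function names are made up for this illustration, and health is just a flag that you flip by hand; a real load balancer would run its own health checks.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Node:
    # Hypothetical node record: a name plus a manually set health flag.
    name: str
    healthy: bool = True


def pick_active_passive(nodes):
    """Return the first healthy node: the primary while it is up, else a standby."""
    for node in nodes:
        if node.healthy:
            return node
    raise RuntimeError("no healthy nodes")


def make_active_active(nodes):
    """Return a picker that rotates over every healthy node (round robin)."""
    counter = count()

    def pick():
        healthy = [n for n in nodes if n.healthy]
        if not healthy:
            raise RuntimeError("no healthy nodes")
        return healthy[next(counter) % len(healthy)]

    return pick
```

In the active/passive picker the second node only ever receives traffic once the first is marked unhealthy, while the active/active picker alternates between all healthy nodes from the start.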

This improves speed and increases reliability through redundancy.

Load balancing is one of the most useful things you can do for a cloud application. It helps to improve performance, availability, and scalability.

Load balancing helps:

- Increase speed and reliability through redundancy. The more servers in a cluster, the greater the chance that some individual server is down at any given moment; however, because traffic is spread across multiple servers, the remaining ones keep serving requests and overall availability goes up. This is an advantage when responses must be fast (e-commerce) or traffic volume is high (cloud services).

- Increase capacity by adding more instances behind the load balancer rather than adding CPU power or RAM to a single machine, which would require bigger hardware. You can also reduce costs by letting services share a pool of existing instances instead of buying separate, dedicated hardware for each type of workload, so less money is wasted on capacity that sits idle.

- Scalability by creating multiple instances, even within one physical machine, so that any given request sent via HTTP(S), TCP, UDP, and so on can be handled by whichever instance is available.
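The redundancy point in the first bullet can be checked with a little arithmetic. The sketch below assumes that servers fail independently and that each one is up with the same probability, which is a simplification; the function names and the 99% figure are chosen only for illustration.

```python
def any_server_down(per_server: float, servers: int) -> float:
    """Probability that at least one of the servers is down at a given moment."""
    return 1.0 - per_server ** servers


def cluster_availability(per_server: float, servers: int) -> float:
    """Probability that at least one server is up, so the service still answers."""
    return 1.0 - (1.0 - per_server) ** servers


# Compare clusters of 1, 2 and 3 servers that are each up 99% of the time.
for n in (1, 2, 3):
    print(n, round(any_server_down(0.99, n), 6), round(cluster_availability(0.99, n), 6))
```

With two servers at 99% each, the chance that some server is down rises to about 2%, but the chance that both are down at once drops to 0.01%, so the service as a whole is available 99.99% of the time under these assumptions.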

It is also used to increase capacity beyond the capacity of any one server, and to scale applications in real time with demand.

Load balancing is a way to distribute traffic across multiple servers. This improves speed and reliability through redundancy, but it can also be used to increase capacity beyond the capacity of any one server, and to scale applications in real time with demand.
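As a rough illustration of scaling with demand, the sketch below estimates how many servers to keep behind the balancer for a given request rate. The function name, the requests-per-second figures, and the idea of keeping one spare server for redundancy are all assumptions made for this example, not a rule from any particular platform.

```python
import math


def servers_needed(demand_rps: float, per_server_rps: float, spare: int = 1) -> int:
    """Servers required to carry demand_rps, plus `spare` extra for redundancy."""
    if per_server_rps <= 0:
        raise ValueError("per_server_rps must be positive")
    # Always keep at least one serving instance, even when demand is zero.
    serving = max(1, math.ceil(demand_rps / per_server_rps))
    return serving + spare


# 2,500 requests per second with servers that handle about 1,000 each.
print(servers_needed(2500, 1000))
```

An autoscaler would re-run a calculation like this as traffic changes and add or remove instances behind the load balancer accordingly.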

The most common use for load balancers is websites that many users access simultaneously, whether that is a surge of visitors on your own site or thousands of people logging into Facebook at the same moment.

The goal is to make sure that every user still gets your page, and that nothing goes down purely because of how much traffic has arrived recently. This is usually done by running copies of the application on different servers; if one server goes down, another takes over its requests until it comes back online, so no user is left without a response.

Rate Limiting is a way to control traffic sent to your application by defining a limit on the number of requests a consumer can make over a particular span of time.

Rate limiting is a way to control traffic sent to your application by defining a limit on the number of requests a consumer can make over a particular span of time.
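One simple way to implement such a limit is a fixed-window counter, sketched below. This is only one of several possible algorithms, and the class name, the `client_id` key, and the injectable `clock` parameter are assumptions made for this example so that it can be tested without waiting for real time to pass.

```python
import time


class FixedWindowLimiter:
    """Allow at most `limit` requests per client in each window of `window_seconds`."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self._state = {}  # client_id -> (window index, requests counted in it)

    def allow(self, client_id):
        current = int(self.clock() // self.window)
        index, used = self._state.get(client_id, (current, 0))
        if index != current:
            # A new window has started, so the client's counter resets.
            index, used = current, 0
        if used >= self.limit:
            self._state[client_id] = (index, used)
            return False
        self._state[client_id] = (index, used + 1)
        return True
```

For example, `FixedWindowLimiter(limit=100, window_seconds=60)` would let each consumer make up to 100 requests per minute, and `allow("some-client")` returns `False` once that consumer has used up its allowance for the current window.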

Rate limiting is used to prevent clients from overloading your website or web service, protecting your application’s infrastructure from overload and ensuring that all users have equitable access to your service.

Load balancing, by comparison, is a way to distribute traffic across multiple backend servers in order to increase the capacity and reliability of your application. For example, a load balancer decides which backend each incoming request is routed to.

By enforcing this limit, you can prevent clients from overloading your website or web service with excessive requests, thus protecting your application’s infrastructure from overload and ensuring that all users have equitable access to your service.

Rate limiting is a way to control traffic sent to your application. By enforcing this limit, you can prevent clients from overloading your website or web service with excessive requests, thus protecting your application’s infrastructure from overload and ensuring that all users have equitable access to your service.
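Enforcing the limit means deciding, for every request, whether to serve it or turn it away. The sketch below uses a token bucket, a common alternative to a plain counter, and answers with HTTP status 429 (Too Many Requests) when the bucket is empty. The class name, the `handle_request` helper, and the `clock` parameter are hypothetical names for this example; in practice you would keep one bucket per client.

```python
import time


class TokenBucket:
    """Refill `rate` tokens per second up to `capacity`; each request costs one token."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full so short bursts are allowed
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Add the tokens earned since the last call, capped at the bucket size.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


def handle_request(bucket):
    """Return an HTTP-style status: 200 if the request is allowed, 429 if not."""
    return 200 if bucket.allow() else 429
```

Compared with a fixed window, a token bucket tolerates short bursts up to `capacity` while still holding the long-run average to `rate` requests per second.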

While the requests a rate limiter rejects can be a useful signal when troubleshooting and debugging, rate limiting should not be your only tool for managing traffic on an application: a limiter just counts requests against a threshold and, by itself, gives you little visibility into how individual users are using the system.

Although both rate limiting and load balancing help keep websites and services online, they do so in different ways.

Although both rate limiting and load balancing help keep websites and services online, they do so in different ways. Rate limiting is a way to prevent overload of your application by limiting the number of requests, for example requests per second (RPS), that each client may send. Load balancing is a way to distribute traffic across multiple servers so that each server handles only its own share of the workload. This allows you to scale out your applications and to keep serving users when a single server fails.
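The two techniques are often used together: the rate limit decides whether a request is accepted at all, and the load balancer decides which server handles it. Here is a deliberately simplified sketch of that order of operations. The `make_gateway` function and the backend names are invented for this example, and the per-client counter never resets, which a real limiter would do per time window.

```python
from itertools import cycle


def make_gateway(backends, limit_per_client):
    """Return a handler that rate-limits per client, then picks a backend round robin."""
    rotation = cycle(backends)
    seen = {}  # client_id -> accepted requests so far (no time window, for brevity)

    def handle(client_id):
        if seen.get(client_id, 0) >= limit_per_client:
            return "rejected"  # rate limiting: this client has used its allowance
        seen[client_id] = seen.get(client_id, 0) + 1
        return next(rotation)  # load balancing: spread accepted requests over backends

    return handle
```

With `make_gateway(["s1", "s2"], 2)`, a client's first two requests go to `s1` and `s2`, its third is rejected, and a different client's request is still served.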

Load balancing helps with redundancy, speed, and capacity; rate limiting helps with security and with protecting your application from abusive or malicious traffic.

Conclusion

The key takeaway here is that both of these technologies can help your application run more smoothly and efficiently. However, they do it in different ways. A load balancer provides redundancy by distributing traffic across multiple servers, while rate limiting helps control traffic sent to your application by defining a limit on the number of requests a consumer can make over a particular span of time. In this blog post we explored how these two technologies work, how they can work together, and which one might be better suited to your needs.
